Building a Policy-First System for Proxy Voting and Governance Analysis

Proxy voting is one of the most structured and observable ways institutional shareholders exercise governance rights. Yet the operational cost of applying voting policies consistently across thousands of ballot items remains high.

As Professor Lucian Bebchuk and others have emphasized, institutional ownership concentrates shareholder power in intermediaries. Voting authority is exercised through layered delegation: beneficiaries to asset owners, asset owners to asset managers, and managers to internal stewardship teams and (often) external research providers. Concentration does not eliminate agency costs; it relocates them. The practical costs of implementing and monitoring voting policy therefore shape whether shareholder power is exercised effectively.

At Proxywise AI, we are building a policy-first proxy voting and governance analysis system designed to make proxy voting more transparent, consistent, and auditable. This post highlights the core design choices behind the system and what we are learning from two early-stage pilots in proxy season 2026 – one with an asset owner and one with a large asset manager – running in parallel against existing workflows for testing and validation.

Why Proxy Voting Is Well-Suited to AI

Proxy voting has an unusually clear structure:

- Inputs: standardized corporate disclosure (especially proxy statements and related filings).

- A policy layer: voting guidelines that can be expressed as criteria, thresholds, and exceptions.

- Outputs: structured decisions—FOR, AGAINST, ABSTAIN/WITHHOLD—supported by rationale.
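As a minimal sketch of that input/policy/output structure, the Python below models a ballot item and a structured decision that carries its rationale and the policy rules it relies on. The class names, fields, and rule IDs (`BallotItem`, `Decision`, `DIR-IND-1`) are hypothetical illustrations, not Proxywise AI's actual schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class Vote(Enum):
    FOR = "FOR"
    AGAINST = "AGAINST"
    ABSTAIN = "ABSTAIN"
    WITHHOLD = "WITHHOLD"  # used instead of AGAINST in some director elections


# Hypothetical schema for illustration only.
@dataclass(frozen=True)
class BallotItem:
    item_id: str         # e.g. "1a"
    proposal_type: str   # e.g. "director_election", "say_on_pay"
    text: str            # ballot language as disclosed


@dataclass
class Decision:
    item_id: str
    vote: Vote
    rationale: str
    rule_ids: list = field(default_factory=list)  # policy rules the vote relies on


item = BallotItem("1a", "director_election", "Elect Jane Doe as director")
decision = Decision(item.item_id, Vote.FOR, "Nominee meets the independence criteria.", ["DIR-IND-1"])
print(decision.vote.value, decision.rule_ids)  # FOR ['DIR-IND-1']
```

Keeping the rule IDs on the decision itself is what later makes rule-level analysis possible.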

At scale, most of the volume is policy application across recurring proposal types (e.g., director elections, say-on-pay, auditor ratification, shareholder proposals). The governance stakes, however, often concentrate in a smaller set of cases that are novel, contested, or context-dependent. This is the practical “90/10 problem”: while most items are straightforward, the “last 10%” require judgment and careful documentation.

That is why the key design question is not whether AI can produce a recommendation, but whether it can do so in a way that strengthens governance. For AI-assisted proxy voting to be governance-enhancing, traceability and explainability must be core standards: recommendations should be linked to specific policy criteria and supporting evidence, and overrides should be documented and reviewable.

How Proxywise AI Works

Proxywise AI is designed as a policy-first workflow that produces not only recommendations, but a record of why those recommendations follow rationally from policy and evidence.

1) Extracting ballot items and the relevant evidence.

The system identifies all ballot items from proxy materials, classifies them by type, and retrieves the sections of disclosure most relevant to the policy criteria at issue. It also incorporates supplementary filings (e.g., the 10-K and 8-Ks), where important context often resides, and can cross-reference other sources, such as public web content or notes from a user’s engagement history.
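The classification step can be illustrated with a deliberately simple keyword classifier. This is a sketch under the assumption that ballot text is available as plain strings; the patterns and type labels are hypothetical, and a production system would more likely use a trained or language-model classifier than regular expressions.

```python
import re

# Hypothetical keyword patterns, checked in order. Shareholder proposals come first
# because their text often mentions directors or compensation.
PATTERNS = {
    "shareholder_proposal": r"\b(shareholder|stockholder) proposal\b",
    "auditor_ratification": r"\bratif\w*\b.*\b(auditors?|accounting firm)\b",
    "say_on_pay": r"\b(advisory vote|executive compensation|say[- ]on[- ]pay)\b",
    "director_election": r"\belect(ion)?\b.*\bdirectors?\b",
}


def classify_item(text: str) -> str:
    """Return the first matching proposal type, or "other" if nothing matches."""
    lowered = text.lower()
    for proposal_type, pattern in PATTERNS.items():
        if re.search(pattern, lowered):
            return proposal_type
    return "other"


print(classify_item("Ratify the appointment of KPMG LLP as auditors"))  # auditor_ratification
```

Ordering matters: checking shareholder proposals first keeps a proposal such as "annual election of directors" from being filed as a director election.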

2) Turning policy into operational rules.

Institutional policies are typically written in prose. To apply them consistently at scale, they must be translated into structured elements – categories, criteria, thresholds, and exception logic. This translation from prose to structured rules is done by the Proxywise AI engine, which also surfaces ambiguity that can otherwise remain implicit, helping teams clarify definitions and edge cases.
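To make "categories, criteria, thresholds, and exception logic" concrete, here is a minimal sketch of one prose guideline encoded as a rule. The `Rule` structure, the rule ID, and the 75% attendance threshold are hypothetical examples chosen for illustration; they are not taken from any client policy or from the Proxywise AI engine.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Facts = dict  # hypothetical: extracted facts keyed by name


@dataclass(frozen=True)
class Rule:
    rule_id: str
    category: str                        # proposal type the rule applies to
    criterion: Callable[[Facts], bool]   # True when the rule's condition is met
    stance: str                          # stance implied when the rule fires
    exception: Optional[Callable[[Facts], bool]] = None  # carve-out that suppresses it

    def evaluate(self, facts: Facts) -> Optional[str]:
        """Return the implied stance, or None if the rule does not fire."""
        if not self.criterion(facts):
            return None
        if self.exception is not None and self.exception(facts):
            return None
        return self.stance


# Illustrative prose: "Vote against directors who attended fewer than 75% of
# meetings, unless the company discloses an acceptable reason."
attendance_rule = Rule(
    rule_id="DIR-ATT-1",
    category="director_election",
    criterion=lambda f: f.get("attendance_pct", 100.0) < 75.0,
    stance="AGAINST",
    exception=lambda f: f.get("absence_reason_disclosed", False),
)

print(attendance_rule.evaluate({"attendance_pct": 60.0}))                                    # AGAINST
print(attendance_rule.evaluate({"attendance_pct": 60.0, "absence_reason_disclosed": True}))  # None
```

Writing the exception as its own field is one way encoding surfaces ambiguity: someone has to decide what counts as an "acceptable reason".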

3) Separating “evidence-first analysis” from the vote decision.

A deliberate guardrail is that analysis is conducted in two stages. The first stage focuses on policy relevance and evidence – what facts matter, what the research sources say, and which policy rules are implicated – without committing to a voting stance. Only in a second stage does the system synthesize that record into a recommendation. This separation is designed to reduce the risk of premature conclusions shaping evidence selection.
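The two-stage separation can be sketched as two functions with deliberately different inputs: the evidence stage sees rule conditions but never their stances, and the decision stage sees only the frozen evidence record. The names, the pay-ratio condition, and the "any AGAINST wins" precedence are hypothetical simplifications, not Proxywise AI's actual synthesis logic.

```python
from dataclasses import dataclass
from typing import Callable, Mapping


@dataclass(frozen=True)
class EvidenceRecord:
    """Stage 1 output: relevant facts and implicated rules, with no voting stance."""
    item_id: str
    facts: tuple             # (name, value) pairs, frozen so stage 2 cannot edit them
    implicated_rules: tuple  # rule IDs whose conditions the facts satisfy


def gather_evidence(item_id: str, facts: Mapping, triggers: Mapping[str, Callable]) -> EvidenceRecord:
    """Stage 1: decide what matters. Sees rule conditions but never rule stances."""
    implicated = tuple(rid for rid, condition in triggers.items() if condition(facts))
    return EvidenceRecord(item_id, tuple(sorted(facts.items())), implicated)


def synthesize(record: EvidenceRecord, stances: Mapping[str, str]) -> str:
    """Stage 2: map the finished record to a recommendation.

    Hypothetical precedence: any implicated AGAINST rule wins; otherwise FOR.
    """
    implied = [stances[rid] for rid in record.implicated_rules]
    return "AGAINST" if "AGAINST" in implied else "FOR"


triggers = {"PAY-1": lambda f: f["ceo_pay_ratio"] > 300}  # hypothetical condition
stances = {"PAY-1": "AGAINST"}
record = gather_evidence("3", {"ceo_pay_ratio": 420}, triggers)
print(record.implicated_rules, synthesize(record, stances))  # ('PAY-1',) AGAINST
```

Because `gather_evidence` never receives `stances`, the choice of evidence cannot be steered by the vote it would imply.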

4) A structured pathway for exceptions.

When policy is ambiguous, conflicting, or context-dependent, or information is insufficient to make a recommendation, the system routes an item to REVIEW, with specific “points for review” to guide the decision-maker. Where needed, it can incorporate targeted external research and re-evaluate, while preserving the audit trail. Over time, these cases create a structured feedback loop for policy calibration—highlighting ambiguities, prompting tighter definitions and allowing institutions to evolve guidelines deliberately rather than through untracked exceptions. The goal is not to eliminate human judgment, but to ensure judgment is exercised where it belongs – mostly in the “last 10%” category – and documented when it occurs.
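A minimal sketch of the REVIEW pathway, assuming each proposal type has a list of facts it needs and each fired rule reports an implied stance. The `REQUIRED_FACTS` table, the rule IDs, and the FOR default are hypothetical; the point is that the router returns concrete "points for review" rather than a bare flag.

```python
from typing import List, Mapping, Tuple

# Hypothetical: facts each proposal type must have before a vote can be recommended.
REQUIRED_FACTS = {
    "director_election": ("attendance_pct", "is_independent"),
    "say_on_pay": ("pay_for_performance_alignment",),
}


def route(proposal_type: str, facts: Mapping, implied_stances: Mapping[str, str]) -> Tuple[str, List[str]]:
    """Return (outcome, points_for_review).

    The outcome is a vote only when the evidence is complete and the implicated
    rules agree; otherwise it is "REVIEW" with the reasons listed.
    """
    points = [f"Missing evidence: {name}"
              for name in REQUIRED_FACTS.get(proposal_type, ())
              if facts.get(name) is None]
    distinct = set(implied_stances.values())
    if len(distinct) > 1:
        detail = ", ".join(f"{rid} -> {s}" for rid, s in sorted(implied_stances.items()))
        points.append(f"Conflicting rules: {detail}")
    if points:
        return "REVIEW", points
    # Hypothetical default: FOR when no rule objects.
    return (distinct.pop() if distinct else "FOR"), []


print(route("director_election", {"attendance_pct": 90, "is_independent": None}, {}))
# ('REVIEW', ['Missing evidence: is_independent'])
```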

5) Producing institutional artifacts.

Stewardship requires outputs that can be reviewed internally, shared, and archived. The system can produce meeting-level summaries and a formatted, customizable report that assembles recommendations, rationales, key evidence, and (where relevant) supplemental research.

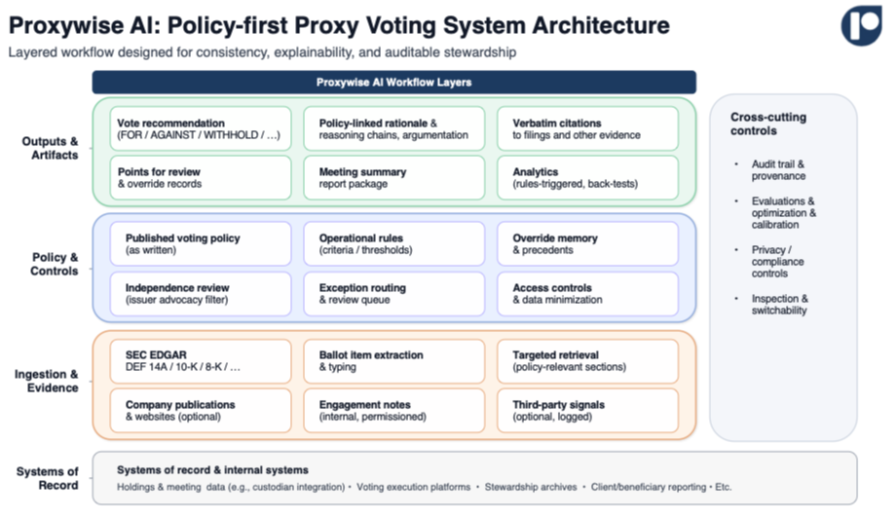

Figure 1: Proxywise AI Workflow (Layers, Controls, Outputs)

Auditability as a Core Output

Proxy voting is one of the few governance functions where outcomes can be continuously tested against observable inputs: policies, filings, realized votes and other research materials. Proxywise AI is built around that property.

Rule-level traceability.

For each ballot item, the system records which policy rules were applied and the directional stance they imply. This makes it possible to analyze which rules fire most frequently, which drive review outcomes, and where overrides cluster.
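Once every item records the rules it applied, the three analyses named above reduce to simple counting. The trace format below is a hypothetical example; a real audit log would carry more fields, but the aggregation works the same way.

```python
from collections import Counter

# Hypothetical trace log: one entry per ballot item, listing the rules that fired,
# the system outcome, and whether a reviewer overrode it.
trace = [
    {"item": "1a", "rules": ["DIR-ATT-1"], "outcome": "AGAINST", "overridden": False},
    {"item": "1b", "rules": ["DIR-ATT-1", "DIR-IND-1"], "outcome": "AGAINST", "overridden": True},
    {"item": "2", "rules": ["PAY-1"], "outcome": "FOR", "overridden": False},
    {"item": "4", "rules": ["SHP-ENV-1"], "outcome": "REVIEW", "overridden": False},
]

# How often each rule fires across all items.
fired = Counter(rule for entry in trace for rule in entry["rules"])
# Which rules appear on items routed to REVIEW.
review_drivers = Counter(rule for entry in trace if entry["outcome"] == "REVIEW" for rule in entry["rules"])
# Which rules appear on items a reviewer overrode.
override_hotspots = Counter(rule for entry in trace if entry["overridden"] for rule in entry["rules"])

print(fired.most_common(1))  # [('DIR-ATT-1', 2)]
```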

Citation-backed reasoning.

Key factual claims are supported by verbatim quotations from the underlying filings and, where relevant, other cited sources—allowing reviewers to verify what was relied upon without depending on paraphrase.
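Because citations are verbatim, each one can be checked mechanically against the source text. The sketch below assumes the filing is available as extracted plain text and tolerates only whitespace and curly-quote differences, which commonly arise from text extraction; the function names are hypothetical.

```python
import re


def _normalize(text: str) -> str:
    # Collapse runs of whitespace and unify curly quotes so that line wrapping or
    # typography in extracted filing text does not cause false mismatches.
    for curly, straight in (("\u2018", "'"), ("\u2019", "'"), ("\u201c", '"'), ("\u201d", '"')):
        text = text.replace(curly, straight)
    return re.sub(r"\s+", " ", text).strip()


def verify_citation(quote: str, source_text: str) -> bool:
    """True if the non-empty quote appears verbatim (up to whitespace and quote style)."""
    needle = _normalize(quote)
    return bool(needle) and needle in _normalize(source_text)


filing = "Mr. Smith attended 8 of the\n12 meetings of the Board held in 2025."
print(verify_citation("attended 8 of the 12 meetings", filing))  # True
print(verify_citation("attended all meetings", filing))          # False
```

A failed check is a useful signal on its own: it means a "quotation" was actually a paraphrase and should be flagged for the reviewer.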

Override governance and precedents.

When a user confirms or overrides a recommendation, the system records the rationale. Overrides can also be saved as precedents for future similar cases (linked to rules), turning institutional judgment into a structured, reviewable input rather than an untracked exception. This also makes it easier for stewardship teams to review their proxy voting policy and suggest evidence-backed amendments.
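One way to link overrides to rules is to index each override record by the rule IDs it departs from, so that a later item implicating the same rules can retrieve it. This in-memory `PrecedentStore` is a hypothetical sketch, not Proxywise AI's storage model; it simply enforces that every override carries a rationale.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Override:
    """Hypothetical override record; frozen so saved precedents cannot be edited."""
    item_id: str
    rule_ids: frozenset   # rules whose implied stance was overridden
    system_vote: str
    final_vote: str
    rationale: str        # free-text justification, required
    decided_on: date


class PrecedentStore:
    """Hypothetical in-memory store that indexes overrides by the rules they touch."""

    def __init__(self) -> None:
        self._by_rule = {}

    def record(self, override: Override) -> None:
        if not override.rationale.strip():
            raise ValueError("an override must include a rationale")
        for rule_id in override.rule_ids:
            self._by_rule.setdefault(rule_id, []).append(override)

    def precedents_for(self, rule_ids) -> list:
        """Earlier overrides sharing at least one rule with a new item, oldest first."""
        found = {o for rid in rule_ids for o in self._by_rule.get(rid, [])}
        return sorted(found, key=lambda o: o.decided_on)


store = PrecedentStore()
store.record(Override("4", frozenset({"SHP-ENV-1"}), "FOR", "AGAINST",
                      "Company already publishes the requested disclosure.", date(2026, 4, 2)))
print(len(store.precedents_for(["SHP-ENV-1", "PAY-1"])))  # 1
```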

Back-testing and calibration.

Proxy voting is unusually testable; Proxywise AI makes that testability practical. Users can compare recommendations against disclosed outcomes and evaluate performance across vote categories. They can also compare how two different policies would vote on the same set of ballot items, making policy differences measurable.
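Both comparisons can be sketched in a few lines, assuming recommendations, disclosed votes, and proposal categories are available as dictionaries keyed by ballot item ID (a hypothetical format). Agreement rate is used here as the simplest performance measure; it is not necessarily the metric Proxywise AI reports.

```python
from collections import defaultdict


def agreement_by_category(recommendations: dict, actual_votes: dict, categories: dict) -> dict:
    """Per-category share of items where the recommendation matches the disclosed vote.

    Items without a disclosed vote are skipped rather than counted as misses.
    """
    hits, totals = defaultdict(int), defaultdict(int)
    for item_id, rec in recommendations.items():
        if item_id not in actual_votes:
            continue
        category = categories[item_id]
        totals[category] += 1
        hits[category] += int(rec == actual_votes[item_id])
    return {c: hits[c] / totals[c] for c in totals}


def policy_diff(policy_a: dict, policy_b: dict) -> dict:
    """Items where two policies' recommendations differ: {item_id: (vote_a, vote_b)}."""
    shared = policy_a.keys() & policy_b.keys()
    return {i: (policy_a[i], policy_b[i]) for i in shared if policy_a[i] != policy_b[i]}


recs = {"1a": "FOR", "1b": "AGAINST", "2": "FOR", "3": "FOR"}
actual = {"1a": "FOR", "1b": "FOR", "2": "FOR", "3": "AGAINST"}
cats = {"1a": "director_election", "1b": "director_election",
        "2": "say_on_pay", "3": "shareholder_proposal"}
print(agreement_by_category(recs, actual, cats))
# {'director_election': 0.5, 'say_on_pay': 1.0, 'shareholder_proposal': 0.0}
```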

The objective is to make policy compliance testable rather than aspirational.

A Note on Data Security & Confidentiality

Proxywise AI primarily processes public issuer and ballot data, structured into individual voting items and evaluated against client-specific policy rules. Any non-public information provided by clients, such as internal voting guidelines or meeting materials, is treated as strictly confidential: it is encrypted and access-restricted on a need-to-know basis. For organizations with stricter information-barrier or residency requirements, we can support dedicated single-tenant deployments, while defaulting to a secure multi-tenant architecture for most use cases.

When AI models such as GPT or Claude are used, queries run via enterprise APIs where customer data is not used for model training and is not retained or repurposed. All outputs remain fully traceable to the underlying ballot item and rule set, ensuring transparency, auditability, and data integrity.

Early Deployment and What We Are Learning

Proxywise AI’s methodology is being tested in two early-stage pilots in proxy season 2026 – one with an asset owner and one with a large asset manager – running in parallel with existing processes. Four themes have emerged:

- Validation depends on the “why,” not only on the vote. Teams care as much about completeness, evidence quality, and correct policy mapping as they do about the voting recommendation. That is where trust is earned and errors are discovered.

- Encoding forces policy clarity. Translating guidelines into operational criteria surfaces ambiguity that was previously resolved informally or even unknowingly. Making those decision points explicit is itself a governance benefit.

- Policy calibration is essential, especially for shareholder proposals. Recommendations can skew further in favor of shareholder proposals than an institution’s historical voting record would suggest, often because the governing policy language is high-level. We address this by adding examples, capturing overrides as rule-linked precedents, and back-testing to detect and correct bias by proposal and policy type.

- Exception handling is the real test. Most items are straightforward. The system’s value is most visible in how reliably it identifies the true “last 10%,” routes those items for review, and supports disciplined decision-making and override documentation.
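The shareholder-proposal skew described in the list above can be detected by comparing FOR rates per proposal type between the system's recommendations and the institution's historical votes on comparable items. The 10-percentage-point tolerance and the input format are hypothetical choices for this sketch; the right threshold would depend on sample size and the institution's own calibration goals.

```python
def for_rate(votes):
    """Fraction of FOR votes in a non-empty list of vote strings."""
    return sum(v == "FOR" for v in votes) / len(votes)


def flag_skew(system_votes, historical_votes, tolerance=0.10):
    """Compare FOR rates per proposal type; tolerance is a hypothetical threshold.

    Both arguments map proposal type -> list of votes on comparable items.
    Returns {proposal_type: rate gap} for types whose gap exceeds the tolerance.
    """
    flagged = {}
    for ptype in system_votes.keys() & historical_votes.keys():
        if not system_votes[ptype] or not historical_votes[ptype]:
            continue  # nothing to compare
        gap = for_rate(system_votes[ptype]) - for_rate(historical_votes[ptype])
        if abs(gap) > tolerance:
            flagged[ptype] = round(gap, 3)
    return flagged


system = {"shareholder_proposal": ["FOR"] * 6 + ["AGAINST"] * 4,
          "say_on_pay": ["FOR"] * 9 + ["AGAINST"]}
history = {"shareholder_proposal": ["FOR"] * 3 + ["AGAINST"] * 7,
           "say_on_pay": ["FOR"] * 9 + ["AGAINST"]}
print(flag_skew(system, history))  # {'shareholder_proposal': 0.3}
```

A positive gap means the system supports that proposal type more often than the institution historically has, which is the pattern the pilots observed.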

Implications

Lower implementation and oversight costs will make robust stewardship feasible for more institutions and can surface misalignment earlier in the delegation chain. If proxy voting becomes more system-mediated, accountability will partly depend on governance of the supporting infrastructure: data quality, validation practices, audit rights, and the practical ability to inspect and switch systems. These are governance questions, not merely technical preferences.

Looking ahead, we expect the stewardship ecosystem to become more differentiated, not less: more investor-specific policies, more explicit exception handling, and higher expectations for evidentiary support. Proxywise AI is built to support that future as an enabling layer: helping institutions implement their chosen policies consistently, concentrate human judgment where it is most decision-relevant, and reduce structural reliance on external proxy advisors by enabling independent, policy-driven ballot assessments. Analysis can be completed within hours of a filing going live, significantly faster than traditional review processes. At the same time, the system preserves a clear audit trail from disclosure to rule to vote. Done well, this strengthens stewardship without turning it into a black box: expanding coverage on routine items, improving discipline and quality on edge cases, and making it easier for beneficiaries, intermediaries, and issuers to understand what drives voting outcomes and how those outcomes evolve over time.

Legal Disclaimer:

EIN Presswire provides this news content "as is" without warranty of any kind. We do not accept any responsibility or liability for the accuracy, content, images, videos, licenses, completeness, legality, or reliability of the information contained in this article. If you have any complaints or copyright issues related to this article, kindly contact the author above.